Submitted URL Has Crawl Issue

Do you see the “Submitted URL has crawl issue” error in Google Search Console?

When you look at the Coverage report, can you see any errors?

This article will show you how to fix this issue.

The “Submitted URL has crawl issue” error is the most difficult of the coverage errors to resolve, because Google gives no clear guidance on what to do.

However, we will look at some of the common causes and how you can fix them.

If you already know what the coverage report is, skip to the “URL Inspection tool” section.

If not, let’s take a look at the coverage report and what it shows you.

Coverage Report

Google crawls your website so that it can add your pages to the Google Index. Once a page is in the index, it can appear in Google Search results.

The coverage report allows you to see which pages Google has indexed. It also shows you any pages that have crawl errors or issues.

There are four sections of the coverage report:

- Errors - Google could not index the page.

- Valid with warnings - Google has indexed the page, but the page has an issue that you should resolve.

- Valid - Google has crawled the page and added it to the index.

- Excluded - Google has not indexed the page, often for a deliberate reason such as a noindex tag, a redirect, a duplicate page, or a robots.txt rule that stops Google from crawling it.
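If you suspect that robots.txt is why a page is blocked, you can check your rules offline with Python's standard `urllib.robotparser` module. This is a rough sketch: the `blocked_urls` helper and the sample rules are hypothetical. The module also matches rules more simply than Google does (it applies the first matching rule with plain prefix matching, while Google uses the most specific rule and supports `*` and `$` wildcards), so confirm any finding with the URL Inspection tool.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list, user_agent: str = "Googlebot") -> list:
    """Return the URLs that the given robots.txt rules disallow for user_agent.

    Rough check only: Python's parser applies the first matching rule with
    plain prefix matching, not Google's longest-match and wildcard rules.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse rules from text, no network access
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules and URLs for illustration.
rules = "User-agent: *\nDisallow: /private/\n"
print(blocked_urls(rules, ["https://example.com/blog/post",
                           "https://example.com/private/report"]))
# ['https://example.com/private/report']
```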

Next, let's dive into the types of errors that the coverage report will give us.

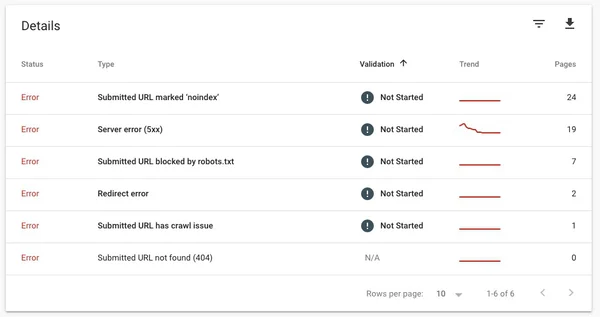

Coverage Report Errors

Any page that has caused an error is not added to the Google Index.

If Google reports an error on a page that you want indexed, you need to fix it.

There are eight types of errors that the coverage report will show:

Server error (5xx) - Your web server returned a 5xx error status code to Google instead of the page.

Redirect error - Sometimes you must redirect a URL, either because the old page is no longer available or because the content has moved. Redirects can become “chained”: one URL redirects to the next, which redirects to the next. When a chain gets too long, or loops back on itself, you will see this error.

Submitted URL blocked by robots.txt - This one is straightforward. You have submitted the page, for example by listing it in your sitemap, but your robots.txt file blocks Google from crawling it. Either remove the URL from your sitemap or unblock it in robots.txt.

Submitted URL marked noindex - You have added the URL to your sitemap or asked Google to index it, yet the page declares noindex, either with a robots meta tag or with an X-Robots-Tag HTTP header.

Submitted URL seems to be a Soft 404 - You have submitted this URL, but the page shows “not found” content while returning a 200 (OK) HTTP status code. A page whose status code says success while its content says “not found” is called a Soft 404.

Submitted URL returns unauthorized request (401) - Pages behind a login return a 401 when you are not logged in. If you submit a URL to Google that requires a user to log in, you will see this error.

Submitted URL not found (404) - You have submitted a URL to the index, but the page returns a 404 (“not found”) HTTP status code.

Submitted URL has crawl issue - Google found an error but that error did not fall into any of the above categories. This is a catch-all for all other types of errors.
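To make the eight categories more concrete, here is a minimal Python sketch that maps the outcome of one fetch to the label it most resembles. Google does not publish how it assigns these labels, so the `FetchResult` record, the `classify` function, the order of the checks, and the soft-404 phrase list are all hypothetical. The 10-hop redirect limit matches what Google documents for Googlebot, but treat it as an assumption too.

```python
import re
from dataclasses import dataclass, field


# Hypothetical record of one fetch of a submitted URL, as your own crawler might log it.
@dataclass
class FetchResult:
    status: int                       # final HTTP status code after redirects
    redirect_hops: int = 0            # number of redirects followed to get there
    blocked_by_robots: bool = False   # True if robots.txt disallows the URL
    headers: dict = field(default_factory=dict)
    body: str = ""


MAX_REDIRECT_HOPS = 10  # assumption: the hop limit Google documents for Googlebot
NOT_FOUND_PHRASES = ("page not found", "404 not found")  # hypothetical soft-404 heuristic
# Simplified: only matches when name="robots" comes before the noindex value.
META_NOINDEX = re.compile(r"<meta[^>]*name=[\"']robots[\"'][^>]*noindex", re.IGNORECASE)


def classify(result: FetchResult) -> str:
    """Return the coverage error label this fetch most resembles, or "OK"."""
    if result.blocked_by_robots:
        return "Submitted URL blocked by robots.txt"
    if result.redirect_hops > MAX_REDIRECT_HOPS:
        return "Redirect error"
    if 500 <= result.status <= 599:
        return "Server error (5xx)"
    if result.status == 401:
        return "Submitted URL returns unauthorized request (401)"
    if result.status == 404:
        return "Submitted URL not found (404)"
    if result.status == 200:
        # HTTP header names are case-insensitive, so compare them in lower case.
        headers = {name.lower(): value for name, value in result.headers.items()}
        if "noindex" in headers.get("x-robots-tag", "").lower() or META_NOINDEX.search(result.body):
            return "Submitted URL marked noindex"
        if any(phrase in result.body.lower() for phrase in NOT_FOUND_PHRASES):
            return "Submitted URL seems to be a Soft 404"
        return "OK"
    # Anything else (for example a 403 or 429) lands in the catch-all.
    return "Submitted URL has crawl issue"


print(classify(FetchResult(status=200, body="<h1>Page Not Found</h1>")))
# Submitted URL seems to be a Soft 404
```

Anything the sketch cannot place falls through to “Submitted URL has crawl issue”, which mirrors how Google uses that label as a catch-all.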

As you can see, the “Submitted URL has crawl issue” error is the most generic. Google suggests we use the URL Inspection tool to fix it.

Let’s take a look at this tool next.

URL Inspection tool

To find out more about what happened when Google crawled a page, use the “URL Inspection tool”. You can select it from the Search Console menu.

Enter the URL of the page with the error. The tool works for both AMP and non-AMP pages. If the page has hreflang alternates or a canonical URL, the tool also reports on any alternate or duplicate versions of the page.

Once you enter a URL, Google shows the report from its last crawl of that page.

These results are not of the live page. They come from the most recent crawl information that Google has stored.

To find out when Google last crawled the page, expand the Coverage section and look for the “Last crawl” date.

If you have changed the page since that date, use the “Test Live URL” button. This fetches the page in real time and checks it for errors, so you can confirm whether your fix has worked.
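If you check many URLs, Search Console also offers a URL Inspection API (the `urlInspection.index.inspect` method). Like the tool's default view, it reports Google's indexed data rather than the live page, including a `lastCrawlTime` field under `inspectionResult.indexStatusResult`. The Python sketch below automates the date comparison described above: has the page changed since Google last crawled it? It assumes you have already made the authenticated request and parsed the JSON response into a dict; the helper names and the sample response are hypothetical, and the timestamp parsing assumes the UTC form ending in `Z`.

```python
from datetime import datetime, timezone


def last_crawl_time(response: dict):
    """Return lastCrawlTime from a URL Inspection API response, or None if absent."""
    index_status = response.get("inspectionResult", {}).get("indexStatusResult", {})
    raw = index_status.get("lastCrawlTime")
    if not raw:
        return None
    # Assumes the UTC form "YYYY-MM-DDTHH:MM:SSZ"; any fractional seconds are dropped.
    return datetime.strptime(raw[:19], "%Y-%m-%dT%H:%M:%S").replace(tzinfo=timezone.utc)


def changed_since_last_crawl(response: dict, changed_at: datetime) -> bool:
    """True if the page changed after Google's last crawl, or no crawl time is reported.

    changed_at must be timezone-aware so it can be compared with the crawl time.
    """
    crawled = last_crawl_time(response)
    return crawled is None or changed_at > crawled


# Hypothetical, trimmed-down response used for illustration.
sample = {"inspectionResult": {"indexStatusResult": {"lastCrawlTime": "2024-03-01T12:00:00Z"}}}
print(changed_since_last_crawl(sample, datetime(2024, 3, 5, tzinfo=timezone.utc)))  # True
```

A `True` result suggests the stored crawl predates your fix, so a live test is the next step.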

This is the very first thing to do when diagnosing the “Submitted URL has crawl issue” error, because the problem is often temporary: the page failed during Google's crawl but has since been fixed.

When Google runs a live test, it does not check the sitemap or referring pages, so the live test results may differ from those of Google's regular crawl.

If the live test succeeds, click the “Request indexing” button.

This tells Google that the page is ready to be indexed and adds it to the crawl queue.

It can take a week or two before you see the results.

If you still get an error when testing the live URL, check the next section for a list of common causes.

Common Causes of the Error

Here are five common causes of this error:

- You have pages in your sitemap that return a 404. Either remove those pages from the sitemap or fix the 404.

- You have listed non-page assets, such as image or video files, as URLs in your sitemap. These are not web pages, so remove them from your sitemap (Google supports separate image and video sitemap extensions if you want these files discovered).

- You have pages in your sitemap that redirect to images or other assets. Remove any such pages from your sitemap.

- Your page had a crawl error but is now fine, and the coverage report has not updated yet. (It can take a few days for the coverage report to update.)

- You have removed pages and they now return a 410 (“Gone”) HTTP status. There is a lag between Google's crawl and the removal of the page from Google Search, and you will see this error during that time.
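If your sitemap is long, checking these causes by hand is slow. The Python sketch below flags sitemap entries that match the first, second, third, and fifth causes; the fourth (a report that has not caught up yet) cannot be detected this way. It assumes a standard XML sitemap and that you have already fetched each URL with your own crawler and recorded its final status code and final URL. The `audit` function, its input format, and the list of asset extensions are all hypothetical. PDFs are deliberately left off the list because Google can index them.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
# Assumption: file types treated as non-page assets; adjust for your own site.
ASSET_EXTENSIONS = (".jpg", ".jpeg", ".png", ".gif", ".webp", ".svg", ".mp4", ".webm")


def sitemap_urls(xml_text: str) -> list:
    """Return every <loc> URL from a standard XML sitemap."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


def is_asset(url: str) -> bool:
    """True if the URL path ends in one of the asset extensions."""
    return urlparse(url).path.lower().endswith(ASSET_EXTENSIONS)


def audit(xml_text: str, fetches: dict) -> dict:
    """Map each problem sitemap URL to a short reason.

    fetches maps a URL to (final HTTP status, final URL after redirects),
    as recorded by your own crawler (hypothetical input format).
    """
    problems = {}
    for url in sitemap_urls(xml_text):
        if is_asset(url):
            problems[url] = "asset listed in sitemap"
            continue
        if url not in fetches:
            continue  # not fetched yet, nothing to judge
        status, final_url = fetches[url]
        if status in (404, 410):
            problems[url] = f"returns {status}"
        elif final_url != url and is_asset(final_url):
            problems[url] = "redirects to an asset"
    return problems
```

Anything `audit` reports is a candidate for removal from the sitemap (or, for a 404 you did not intend, a page to fix), after which you can re-test with the URL Inspection tool.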

Once you have fixed the issues listed above, use the URL Inspection tool to test that the page works. If the test passes, request indexing.

If you discover a cause that is not listed above, please leave a comment below.

Wrapping Up, Submitted URL Has Crawl Issue

The coverage report from Google Search Console gives you feedback on any errors on your site. You can view the most recent Google crawl data by using the URL Inspection tool.

Using this tool, we can see any errors that happened during the crawl.

Any error found during Google's crawl stops the page from appearing in Google Search.

So we want to fix these errors as soon as possible.

The coverage report groups these errors into eight types.

The hardest to fix is the “Submitted URL has crawl issue”. This is a catch-all for any other error. Google gives us very little information on how to fix the error.

Instead, we must turn to the “URL Inspection” tool and compare Google's stored version of the page with the live version.

If the live version has no errors, we can request indexing.

This can take between one and two weeks to complete.

If the live version also returns an error, work through the common causes and test again.

Once Google has recrawled and indexed the page, it can start to appear in Google Search results.